The Hidden Cost of Defect Review Decisions


In high-volume semiconductor production, inspection does not create yield loss. Uncertain decisions on sampled wafers do.

As inspection systems become more sensitive, they detect more anomalies and generate more data than human reviewers can consistently classify. Under uncertainty, decisions become conservative, and good wafers are unnecessarily scrapped. The impact? A hidden, multi-million-euro cost per fab per year.

This article explores how inspection has outpaced decision capacity, why manual review introduces yield loss and operational bottlenecks, and how more data alone does not improve outcomes.

It also outlines the need for a shift in the review model, toward structured, data-supported decision-making that reduces uncertainty and recovers yield at scale.

The Operational Reality of Defect Review

In high-volume production, defect review decisions can account for millions of euros in recoverable yield per fab, per year.

Although process control and inspection are automated, defect review is still largely human-operated and inconsistent. The real cost appears when humans make decisions under uncertainty, which leads to conservative containment and unnecessary scrapping. When inspection systems flag anomalies, engineers must determine whether those signals represent actual defects or acceptable variation. If the reviewer is unsure, the safer decision is to treat the wafer conservatively, and good die may be discarded.

In most fabs, only a small fraction of suspect wafers can be reviewed in detail, yet decisions are applied across the full lot. The remaining wafers are never evaluated; their disposition is only inferred. Any uncertainty on a few sampled wafers can drive lot-level actions such as shock-binning or inking, expanding containment beyond the true defect footprint. As demand for high-value segments such as automotive and high-power SiC increases, each wafer carries more value, driving up the cost of conservative decisions.

Inspection Has Outpaced Decision Capacity

These challenges stem from inspection and decision-making evolving at different speeds. In automotive and power semiconductor manufacturing, inspection capability has advanced to support near-100% quality coverage on critical process steps. Automated optical inspection (AOI) sensitivity keeps increasing, so tools flag more potential defects, including subtle and borderline anomalies. While these tools perform their roles well, the issues begin after the defect is detected.

Every flagged defect requires interpretation. Engineers must evaluate images and classification data to determine whether the signal represents a true defect, a nuisance pattern, or acceptable process variation. These review decisions require judgment, and AOI now finds more anomalies than humans can confidently classify at scale.

As a result, manual processes struggle to scale at the same rate as inspection capability. Review teams face growing workloads while production lines continue to accelerate. For many fabs, this review stage becomes a hidden constraint within the manufacturing flow, impacting yield and eroding margins over time.

Uncertainty Drives Yield Loss, Not Defects

Yield improvements translate directly into margin protection, particularly in high-volume production. Inspection flows are typically designed with this in mind. They focus on preventing defect escapes using AOI systems that are already extremely sensitive by design.

However, this sensitivity introduces ambiguity. AOI systems generate large numbers of false positives, nuisance patterns, and borderline anomalies. The ambiguity surfaces during classification, when an engineer is unsure whether a signal represents a true defect or an acceptable variation. In these cases, “unknown” categories often become catch-all bins.

Manual review lacks confidence signals, and because missing a real defect is risky, reviewers naturally over-classify borderline cases. The conservative option wins: engineers use high-severity bins and prefer scrap over uncertainty. Over time, this creates a systematic bias toward precautionary binning, where uncertainty is resolved by escalating severity rather than validating actual impact.

Good wafers are scrapped even when the anomaly has no impact on device performance. In sampling-based review flows, a conservative interpretation of a few reviewed wafers can lead to disproportionate yield loss across an entire lot. Small percentages of lost die across large wafer volumes soon add up.
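To make the "small percentages add up" point concrete, the arithmetic can be sketched as below. This is a minimal illustration, not a costing model: the function name and every input value are hypothetical placeholders chosen for the example, not measured fab data.

```python
def false_scrap_cost(wafers_per_year, die_per_wafer, false_scrap_rate, value_per_die):
    """Estimate the annual value of good die discarded by conservative review.

    false_scrap_rate is the fraction of good die scrapped unnecessarily (0 to 1).
    """
    if not 0.0 <= false_scrap_rate <= 1.0:
        raise ValueError("false_scrap_rate must be between 0 and 1")
    return wafers_per_year * die_per_wafer * false_scrap_rate * value_per_die


# Hypothetical inputs, for illustration only (assumptions, not fab data).
cost = false_scrap_cost(
    wafers_per_year=100_000,
    die_per_wafer=500,
    false_scrap_rate=0.005,  # 0.5% of good die scrapped unnecessarily
    value_per_die=10.0,      # euros per die
)
print(f"Estimated annual false-scrap cost: EUR {cost:,.0f}")
```

With these assumed inputs, a false-scrap rate of only half a percent works out to roughly EUR 2.5 million per year. Real figures depend on each fab's volumes, die sizes, and product mix.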

At its core, this is a human capacity problem. As inspection sensitivity increases, more ambiguous anomalies are detected while human review capacity remains limited. When this gap widens, it translates directly into millions of euros in lost yield per fab each year, with a measurable impact on P&L.

Ready to reduce yield loss from review bottlenecks?

The Yield Bottlenecks of Manual Review

When looking at P&L, yield loss is often traced back to production constraints such as lithography capacity, tool utilization, or cycle time. But as inspection sensitivity increases, more fab leaders recognize what is often described as the Throughput Paradox: more anomalies are detected, the burden shifts from detection to interpretation, and manual review becomes the bottleneck.

Manual classification introduces operational variability that is difficult to control. Over a 12-hour shift, reviewers may struggle to consistently distinguish a nuisance from a killer defect. Engineers can evaluate thousands of inspection images during a single shift, which leads to fatigue and inconsistent judgment.

Additionally, the same anomaly can lead to different yield decisions. Differences in reviewer experience or available context can influence how defects are interpreted, and separate shifts may reach different conclusions when assessing similar images.
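One common way to quantify this kind of reviewer disagreement is Cohen's kappa, which measures how often two reviewers agree beyond what chance alone would produce. The sketch below is an illustration only: the class labels and the two example shifts are made up, and the article does not state which agreement metric, if any, a given fab uses.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two reviewers labelling the same set of images."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Fraction of images on which both reviewers chose the same class.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each reviewer's class frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used one identical class throughout
    return (observed - expected) / (1 - expected)


# Hypothetical classifications of the same eight images by two shifts.
shift_a = ["killer", "nuisance", "nuisance", "killer",
           "nuisance", "unknown", "nuisance", "killer"]
shift_b = ["killer", "killer", "nuisance", "killer",
           "unknown", "unknown", "nuisance", "nuisance"]
print(f"kappa = {cohens_kappa(shift_a, shift_b):.2f}")
```

In this made-up example the two shifts agree on five of eight images, which gives a kappa of about 0.41. A value of 1.0 means perfect agreement and 0 means agreement no better than chance.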

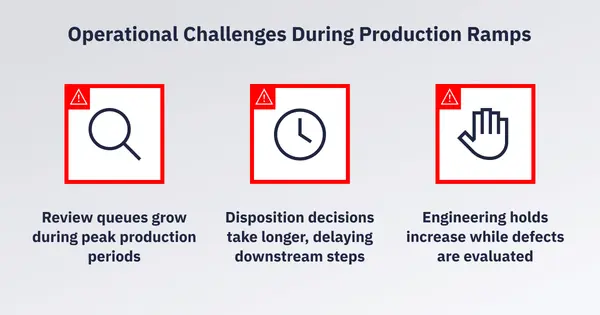

Reviewers also have limited time to evaluate each case. During production ramps, this pressure intensifies, leading to several operational challenges.

When workloads peak, decision thresholds tighten. The result is conservative classification and slower decision cycles, in a production environment that depends on speed and consistency. At the same time, the volume of inspection data continues to grow. The decision-making process does not improve, driving ongoing losses in yield and margin.

Massive Inspection Data, Limited Decision Intelligence

These issues are compounded by the volume of data involved. AOI images, classification bins, review history, and yield data accumulate quickly across production environments. Yet despite collecting terabytes of data, fabs struggle to understand why yield changes. Data is not the limitation; the issue is how decisions are made from it.

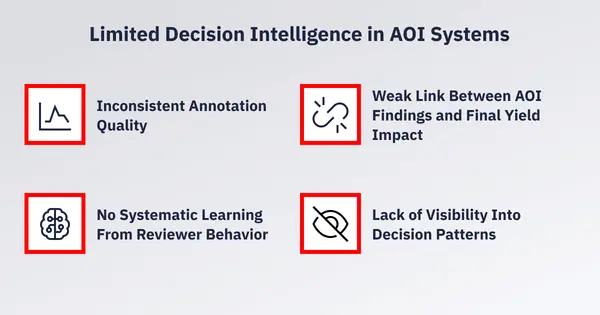

Inconsistent Annotation Quality

Human classifications are often inconsistent and not always accurate, so the underlying data used for decision-making lacks reliability. The same defect may be classified differently by different reviewers, with incorrect decisions compounding over time.

No Systematic Learning From Reviewer Behavior

Engineering often lacks a tight feedback loop. Review decisions do not improve AOI models fast enough, and root causes are not linked to classification trends.

Weak Link Between AOI Findings and Final Yield Impact

Anomalies are reviewed and classified, but their relationship to final sort yield remains unclear. It becomes difficult to distinguish killer defects from process-induced nuisance patterns, leading to revenue loss from false scrap.

Lack of Visibility Into Decision Patterns

Thousands of review decisions are made across shifts and production lines, but the reasoning behind them is rarely captured as reusable data. Inspection datasets grow, but decision logic remains invisible.

Together, these challenges limit how effectively data can be translated into action. As inspection datasets grow, decision quality does not improve, making it difficult to protect margins and manage P&L performance. Addressing this requires a new approach to review.

Closing the Gap Between Detection and Decision

Reducing uncertainty in the defect review stage allows manufacturers to recover good wafers that might otherwise be scrapped, reduce unnecessary lot-level actions, and improve the economic return of existing inspection infrastructure. In high-volume environments, this translates into multi-million-euro impact per fab each year. The next step to unlocking this value requires a shift in the review model.

Instead of relying on broad manual sampling and operator interpretation as the primary decision layer, the review process should move toward structured classification supported by data. In this model, not every anomaly requires human attention. Many signals represent nuisance or acceptable variation and do not need to influence lot-level decisions.

Human effort then focuses on what remains uncertain. Rather than reviewing large numbers of wafers to infer a decision, reviewers validate edge cases and maintain ground-truth integrity through selective audit. This represents a transition from sampling-based review to a more targeted, exception-driven model that improves production economics.
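An exception-driven flow of this kind can be sketched as a simple routing rule. This is a minimal illustration under stated assumptions, not a description of any particular product: the `Anomaly` fields, the `triage` function, the confidence threshold, and the audit rate are all hypothetical, and it assumes an upstream classifier that reports a confidence score for each anomaly.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Anomaly:
    anomaly_id: str
    predicted_class: str  # e.g. "nuisance" or "killer" (hypothetical labels)
    confidence: float     # assumed classifier confidence in [0, 1]


def triage(anomalies, confidence_threshold=0.95, audit_rate=0.05, seed=0):
    """Split anomalies into auto-dispositioned, human-review, and audit queues."""
    rng = random.Random(seed)  # seeded so the audit sample is reproducible
    auto, review, audit = [], [], []
    for anomaly in anomalies:
        if anomaly.confidence < confidence_threshold:
            review.append(anomaly)   # uncertain: needs human judgment
        elif rng.random() < audit_rate:
            audit.append(anomaly)    # confident, but sampled for a spot check
        else:
            auto.append(anomaly)     # confident: no human attention needed
    return auto, review, audit


# Hypothetical anomalies, for illustration only.
batch = [
    Anomaly("A-001", "nuisance", 0.99),
    Anomaly("A-002", "killer", 0.62),
    Anomaly("A-003", "nuisance", 0.97),
]
auto, review, audit = triage(batch)
print(len(auto), len(review), len(audit))
```

The threshold and audit rate are the levers in such a scheme: a higher threshold sends more cases to reviewers, while the audit sample provides the selective check that keeps ground truth from drifting. Appropriate values would have to be set and validated per fab.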

In our next article, we will explore how AI-driven automatic defect classification (AI ADC) enables this shift and supports structured, data-driven review at scale. You can also quantify recoverable yield with AI ADC based on your own fab conditions, using our new Yield Assessment Calculator.